Stories Data SpeakJekyll2024-07-30T04:20:17+00:00https://duttashi.github.io/Ashish Dutthttps://duttashi.github.io/ashishdutt@yahoo.com.my<![CDATA[]]>https://duttashi.github.io/2019-08-11-how-to-upload-external-data-in-aws-s3-read-and-analyze-it2024-07-30T04:20:17+00:002024-07-30T04:20:17+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.my<h3 id="introduction">Introduction</h3>

<p>Recently, I stepped into the AWS ecosystem to learn and explore its capabilities. I’m documenting my experiences in these series of posts. Hopefully, they will serve as a reference point to me in future or for anyone else following this path. The objective of this post is, to understand how to create a data pipeline. Read on to see how I did it. Certainly, there can be much more efficient ways, and I hope to find them too. If you know such better method’s, please suggest them in the <code class="language-plaintext highlighter-rouge">comments</code> section.</p>

<h4 id="how-to-upload-external-data-in-amazon-aws-s3">How to upload external data in Amazon AWS S3</h4>

<p><strong>Step 1</strong>: In the AWS S3 user management console, click on your bucket name.</p>

<p><img src="https://duttashi.github.io/images/s3-1.PNG" alt="plot1" /></p>

<p><strong>Step 2:</strong> Use the upload tab to upload external data into your bucket.</p>

<p><img src="https://duttashi.github.io/images/s3-2.PNG" alt="plot2" /></p>

<p><strong>Step 3:</strong> Once the data is uploaded, click on it. In the <code class="language-plaintext highlighter-rouge">Overview</code> tab, at the bottom of the page you’ll see, <code class="language-plaintext highlighter-rouge">Object Url</code>. Copy this url and paste it in notepad.</p>

<p><img src="https://duttashi.github.io/images/s3-3.PNG" alt="plot3" /></p>

<p><strong>Step 4:</strong></p>

<p>Now click on the <code class="language-plaintext highlighter-rouge">Permissions</code> tab.</p>

<p>Under the section, <code class="language-plaintext highlighter-rouge">Public access</code>, click on the radio button <code class="language-plaintext highlighter-rouge">Everyone</code>. It will open up a window.</p>

<p>Put a checkmark on <code class="language-plaintext highlighter-rouge">Read object permissions</code> in <code class="language-plaintext highlighter-rouge">Access to this objects ACL</code>. This will give access to reading the data from the given object url.</p>

<p>Note: Do not give <em>write object permission access</em>. Also, if read access is not given then the data cannot be read by Sagemaker</p>

<p><img src="https://duttashi.github.io/images/s3-4.PNG" alt="plot4" /></p>

<h3 id="aws-sagemaker-for-consuming-s3-data">AWS Sagemaker for consuming S3 data</h3>

<p><strong>Step 5</strong></p>

<ul>

<li>

<p>Open <code class="language-plaintext highlighter-rouge">AWS Sagemaker</code>.</p>

</li>

<li>

<p>From the Sagemaker dashboard, click on the button <code class="language-plaintext highlighter-rouge">create a notebook instance</code>. I have already created one as shown below.</p>

</li>

</ul>

<p><img src="https://duttashi.github.io/images/s3-5.PNG" alt="plot5" /></p>

<ul>

<li>click on <code class="language-plaintext highlighter-rouge">Open Jupyter</code> tab</li>

</ul>

<p><strong>Step 6</strong></p>

<ul>

<li>In Sagemaker Jupyter notebook interface, click on the <code class="language-plaintext highlighter-rouge">New</code> tab (see screenshot) and choose the programming environment of your choice.</li>

</ul>

<p><img src="https://duttashi.github.io/images/sagemaker-1.PNG" alt="plot6" /></p>

<p><strong>Step 7</strong></p>

<ul>

<li>Read the data in the programming environment. I have chosen <code class="language-plaintext highlighter-rouge">R</code> in step 6.</li>

</ul>

<p><img src="https://duttashi.github.io/images/sagemaker-2.PNG" alt="plot7" /></p>

<h3 id="accessing-data-in-s3-bucket-with-python">Accessing data in S3 bucket with python</h3>

<p>There are two methods to access the data file;</p>

<ol>

<li>The Client method</li>

<li>The Object URL method</li>

</ol>

<p>See this <a href="https://github.com/duttashi/functions/blob/master/scripts/python/accessing%20data%20in%20s3%20bucket%20with%20python.ipynb">IPython notebook</a> for details.</p>

<p><strong>AWS Data pipeline</strong></p>

<p>To build an AWS Data pipeline, following steps need to be followed;</p>

<ul>

<li>Ensure the user has the required <code class="language-plaintext highlighter-rouge">IAM Roles</code>. See this <a href="https://docs.aws.amazon.com/datapipeline/latest/DeveloperGuide/dp-get-setup.html">AWS documentation</a></li>

<li>

<p>To use AWS Data Pipeline, you create a pipeline definition that specifies the business logic for your data processing. A typical pipeline definition consists of <a href="https://docs.aws.amazon.com/datapipeline/latest/DeveloperGuide/dp-concepts-activities.html">activities</a> that define the work to perform, <a href="https://docs.aws.amazon.com/datapipeline/latest/DeveloperGuide/dp-concepts-datanodes.html">data nodes</a> that define the location and type of input and output data, and a <a href="https://docs.aws.amazon.com/datapipeline/latest/DeveloperGuide/dp-concepts-schedules.html">schedule</a> that determines when the activities are performed.</p>

</li>

<li></li>

</ul>

<![CDATA[Risky loan applicants data analysis case study]]>https://duttashi.github.io/blog/to_lend_funds_or_not2023-05-02T00:00:00+00:002023-05-02T00:00:00+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.myblog<p>The following data analysis is based on a publicly available dataset hosted at <a href="https://www.kaggle.com/search?q=lending+club+loan+data+in%3Adatasets">Kaggle</a>. The complete code is located on my <a href="https://github.com/duttashi/scrapers/blob/master/AT%26T_round2_data_analysis.py">github</a></p>

<h5 id="exploratory-data-analysis">EXPLORATORY DATA ANALYSIS</h5>

<ul>

<li>The dataset is a single csv file. It has a shape of 42,542 observations in 144 variables.

<ul>

<li>The response or dependent variable is “loan_status” and is categorical in nature.</li>

</ul>

</li>

<li>Off the 144 variables, majority of them (~110) are continuous in nature and rest are categorical data types.</li>

<li>All 144 variables have missing values.

- Variables with 80% missing data were removed. The dataset size reduced to 54 variables.</li>

<li>Correlation treatment helped reduce dataset size to 45 variables. Turns out, independent variables such as <code class="language-plaintext highlighter-rouge">funded amount</code>, <code class="language-plaintext highlighter-rouge">funded amount inv</code>, <code class="language-plaintext highlighter-rouge">installment</code>, <code class="language-plaintext highlighter-rouge">total payment</code>, <code class="language-plaintext highlighter-rouge">total payment inv</code>, <code class="language-plaintext highlighter-rouge">total rec prncp</code>, <code class="language-plaintext highlighter-rouge">total rec int</code>, <code class="language-plaintext highlighter-rouge">collection recovery fee</code> and <code class="language-plaintext highlighter-rouge">pub rec bankruptcies</code> are strongly correlated (>=80%) with the dependent variable.</li>

<li>By this stage, the dataset shape is 42,542 observations in 45 variables (25 continuous, 3 datetime, and 17 categorical).</li>

<li>The dependent variable has 4 factor levels. I recoded the 4 factor levels to 2 as asked by the assignment.

<ul>

<li>34116 observations for loans that were fully paid</li>

<li>8426 observations for loans that were charged off</li>

</ul>

</li>

<li>The dependent variable was label encoded to make it suitable for model building. As earlier stated, it’s now a binary categorical variable with two levels. Label <code class="language-plaintext highlighter-rouge">1</code> refers to <code class="language-plaintext highlighter-rouge">Fully Paid</code> and Label <code class="language-plaintext highlighter-rouge">0</code> refers to <code class="language-plaintext highlighter-rouge">Charged Off</code>.</li>

<li>It should be noted, the dependent variable is imbalanced in nature. This means, data balancing method need to be applied for building a robust model.</li>

</ul>

<h5 id="visuals">VISUALS</h5>

<ul>

<li>A histogram comparing the annual income of applicants from the states of West Virginia (WV) and New Mexico (NM). Is there any relationship here?</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy_2023_april_1.png" alt="hist1" /></p>

<p>Fig-1: Average annual income of applicants from WV and NM</p>

<ul>

<li>The top Top 3 states with highest number of loan defaults are <code class="language-plaintext highlighter-rouge">California (CA)</code>, <code class="language-plaintext highlighter-rouge">New York (NY)</code>and <code class="language-plaintext highlighter-rouge">Texas (TX)</code>.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy_2023_april_2.png" alt="bar1" /></p>

<p>Fig-2: Top 3 states with highest loan defaults</p>

<h5 id="data-sampling">DATA SAMPLING</h5>

<ul>

<li>To build a classifier model, I took following steps,

<ul>

<li>Data shape at this stage was <code class="language-plaintext highlighter-rouge">(42542, 45)</code>.</li>

<li>Took a 0.05% random sample of the dataset for further analysis.</li>

<li>Data shape of sample size was <code class="language-plaintext highlighter-rouge">(2127, 45)</code>.</li>

<li>The reason I took a sample of the original dataset was the presence of several categorical variables with factor levels greater than 5. Label encoding such categorical variables yielded meaningless information in model building and one-hot encoding blew up the dataset size to more than 3GB!</li>

<li>Did label encoding for categorical variables with factor levels less than or equal to 2 <code class="language-plaintext highlighter-rouge">(term, pymnt_plan</code>, <code class="language-plaintext highlighter-rouge">initial_list_status</code>, <code class="language-plaintext highlighter-rouge">application_type</code>, <code class="language-plaintext highlighter-rouge">hardship_flag</code>, <code class="language-plaintext highlighter-rouge">debt_settlement_flag</code>, <code class="language-plaintext highlighter-rouge">target)</code>.</li>

<li>Did one-hot encoding for rest of categorical variables with factor levels greater than 2. Dataset shape becomes <code class="language-plaintext highlighter-rouge">(2127, 6965)</code></li>

</ul>

</li>

</ul>

<h5 id="model-building">MODEL BUILDING</h5>

<ul>

<li>Null Hypothesis: From Fig-1, its apparent there is no relationship between the average annual income of applicants from WV and NM. To verify this claim further, a significance test is conducted using the <code class="language-plaintext highlighter-rouge">ttest_1samp()</code> function from the <code class="language-plaintext highlighter-rouge">scipy.stats</code> library.</li>

<li>Used label encoded data.</li>

<li>Performed a stratified random sampling to split the dataset into 80% train and 20% test parts (in code, see lines 124 to line 154).

<ul>

<li>Chose logistic regression algorithm</li>

</ul>

</li>

<li>Building a classification model on imbalanced dependent variable

<ul>

<li>F1 score for loan status with value Charged Off (0) is 90%</li>

<li>F1 score for loan status with value Fully Paid (1) is 98%</li>

</ul>

</li>

<li>Applied synthetic minority over sampling (SMOTE) method for data balancing

<ul>

<li>F1 score for loan status with value Charged Off (0) is 99%</li>

<li>F1 score for loan status with value Fully Paid (1) is 100%</li>

</ul>

</li>

</ul>

<p>Model Summary statistics as follows;</p>

<h4 id="imbalanced-data-classification">Imbalanced data classification</h4>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code> precision recall f1-score support

0 1.00 0.74 0.85 68

1 0.95 1.00 0.98 358

accuracy 0.96 426

macro avg 0.98 0.87 0.91 426

weighted avg 0.96 0.96 0.96 426

Resampled data shape: (2856, 6975)

Balanced target

0 1428

1 1428

Name: target, dtype: int64

</code></pre></div></div>

<h4 id="balanced-data-using-smote">Balanced data using SMOTE</h4>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code> precision recall f1-score support

0 0.98 0.90 0.94 68

1 0.98 1.00 0.99 358

accuracy 0.98 426

macro avg 0.98 0.95 0.96 426

weighted avg 0.98 0.98 0.98 426

</code></pre></div></div>

<h4 id="end-notes">End notes</h4>

<p>To develop a strategy for risk averse customers, the following points may be considered;</p>

<ul>

<li>We should target semi-urban or rural locations. Reason, such areas are replete with middle-economic class and/or lower economic class groups of people. In such sections of society, the penetration of information on Peer to Peer (P2P) lending is low. Our priority should be to educate such masses of people on the benefits and pitfalls of P2P lending as compared to other lending methods.</li>

<li>Next, such customers can be educated about the Mutual Fund (MF) investment options, in particular the debt MF growth option. This strategy may help to maintain low default rates because the debt MF expense ratio charged by MF companies are comparatively less as compared to equity MF expense ratios.</li>

</ul>

<![CDATA[Predicting the misclassification cost incurred in air pressure system failure in heavy vehicles]]>https://duttashi.github.io/blog/air-pressure-heavy-vehicle2020-03-01T00:00:00+00:002020-03-01T00:00:00+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.myblog<h3 id="abstract">Abstract</h3>

<p>The Air Pressure System (APS) is a type of function used in heavy vehicles to assist braking and gear changing. The APS failure dataset consists of the daily operational sensor data from failed Scania trucks. The dataset is crucial to the manufacturer as it allows to isolate components which caused the failure. However, missing values and imbalanced class problems are the two most challenging limitations of this dataset to predict the cause of the failure. The prediction results can be affected by the way of handling these missing values and imbalanced class problem. In this report, I have examined and presented the impact of three data balancing techniques, namely: under sampling, over sampling and Synthetic Minority Over Sampling Technique in producing significantly better results. I have also performed an empirical comparison of their performance by applying three different classifiers namely: Logistic Regression, Gradient Boosting Machines, and Linear Discriminant Analysis on this highly imbalanced dataset. The primary aim of this study is to observe the impact of the aforementioned data balancing techniques in the enhancement of the prediction results and performing an empirical comparison to determine the best classification model. I found that the logistic regression over-sampling technique is the highest influential method for improving the prediction performance and false negative rate.</p>

<h3 id="1-introduction">1. Introduction</h3>

<p>This data set is created by Scania CV AB Company to analyze APS failures and operational data for Scania Trucks. The dataset’s positive class consists of component failures for a specific component of the APS system. The negative class consists of trucks with failures for components not related to the APS.</p>

<h3 id="2-objective">2. Objective</h3>

<p>The objective of this report are two fold, namely;</p>

<p>a. To develop a Predictive Model (PM) to determine the class of failure</p>

<p>b. To determine the cost incurred by the company for misclassification.</p>

<h3 id="3-data-analysis">3. Data Analysis</h3>

<p>A systematic data analysis was undertaken to answer the objectives.</p>

<h4 id="a-data-source">A. Data source</h4>

<p>For this analysis, I have used the dataset hosted on <a href="https://archive.ics.uci.edu/ml/datasets/APS+Failure+at+Scania+Trucks">UCI ML Repository</a></p>

<h4 id="b-exploratory-data-analysis">B. Exploratory Data Analysis</h4>

<p>There were two sets of data, the training set and the test set.</p>

<h5 id="i-observations">i. Observations</h5>

<ul>

<li>The training set consisted of 60,000 observations in 171 variables and</li>

<li>The test set consist of 16,000 observations in 171 variables.</li>

<li>The missing values were coded as “na”</li>

<li>The training set had 850015 missing values</li>

<li>The test set had 228680 missing values</li>

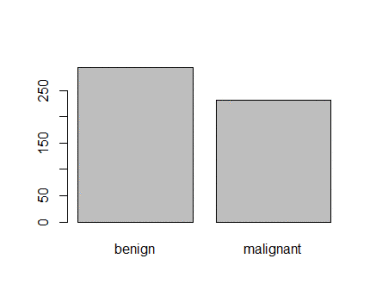

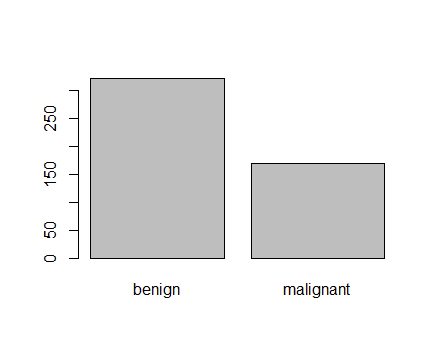

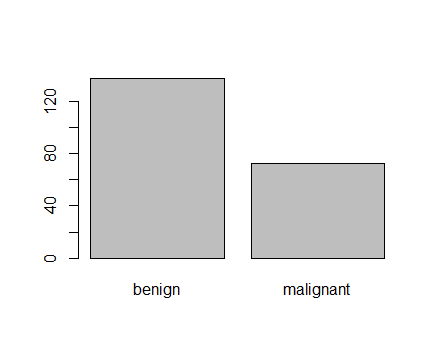

<li>The outcome or the dependent variable was highly skewed or imbalanced as shown in Figure 1</li>

</ul>

<p><img src="https://duttashi.github.io/images/CS_DSI_2_1.png" alt="plot1" /></p>

<p>Figure 1: Imbalanced class distribution</p>

<h5 id="ii-dimensionality-reduction-steps-for-training-data">ii. Dimensionality reduction steps for training data</h5>

<p>The training set contained 60,000 observations in 171 variables of which the dependent variable was binary in nature called, “class”. I had to find the variables that accounted for maximum variance. I took the following measures for dimensionality reduction:</p>

<p>a) Check for variables with more than 75% missing data</p>

<p>I found 6 independent variables that satisfied this property. I removed them from subsequent analysis. The count of independent variables decreased to 165.</p>

<p>b) Check for variables with more than 80% zero values</p>

<p>I found 33 independent variables that satisfied this property. I removed them from subsequent analysis. The count of independent variables decreased to 132.</p>

<p>c) Check for variables where standard deviation is zero</p>

<p>I found 1 independent variable that satisfied this property. I removed it from subsequent analysis. The count of independent variables decreased to 131.</p>

<p>d) Check for variables with near zero variance property</p>

<p>I found 10 independent variables that satisfied this property. I removed them from subsequent analysis. The count of independent variables decreased to 121.</p>

<p>e) Missing data detection and treatment</p>

<p>Since all independent variables were continuous in nature, I used median to impute the missing values in them. In Figure 2, I’ve shown the missing data pattern visualization.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_2_2.png" alt="plot2" /></p>

<p>Figure 2: Missing data visualization for training dataset</p>

<p>In Figure 2, the black colored histogram actually shows the missing data pattern. As the number of independent variables was huge, not all of them are shown and hence the color is black.</p>

<p>f) Correlation detection and treatment</p>

<p>I found several continuous variables to be highly correlated. I applied an unsupervised approach, the Principal Component Analysis (PCA) to extract non-correlated variables. PCA also helps in dimensionality reduction and provides variables with maximum variance. In Figure 3, I have shown the important principal components.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_2_3.png" alt="plot3" /></p>

<p>Figure 3: Important principal components for training dataset</p>

<h4 id="c-predictive-modeling">C. Predictive modeling</h4>

<p>As noted above (see sub-section B-i), this dataset was severely imbalanced. If left untreated, the predictions will be incorrect. I will now show the predictions on the original imbalanced dataset followed by the predictions on the balanced dataset. Thereafter, I’ve provided a discussion on the same.</p>

<h5 id="i-assumption">i. Assumption</h5>

<p>In this analysis, my focus is on correctly predicting the positive class, i.e., the trucks with component failures for a specific component of the APS system.</p>

<h5 id="ii-data-splitting">ii. Data splitting</h5>

<p>I created a control function based on 3-fold cross validation. Then I split the training set into 70% training and 30% test set. The training dataset contained 42,000 observations in 51 variables. The test set contained 18,000 observations in 51 variables.</p>

<h5 id="iii-justification-on-classifier-metric-choice">iii. Justification on classifier metric choice</h5>

<p>Note, I chose Precision Recall Area Under Curve (PR AUC) as a classification metric over Receiver Operating Curve Area Under Curve (ROC AUC).</p>

<p>The key difference is that ROC AUC will be the same no matter what the baseline probability is, but PR AUC may be more useful in practice for needle-in-haystack type problems or problems where the “positive” class is more interesting than the negative class. And this is my fundamental justification to why I chose PR AUC over ROC AUC, because I’m interested in predicting the positive class. This also answers the challenge metric on reducing the type 1 and type II errors.</p>

<h5 id="iv-predictive-modeling-on-imbalanced-training-dataset">iv. Predictive modeling on imbalanced training dataset</h5>

<p>I chose 3 classifiers namely logistic regression (logreg), linear discriminant analysis (lda) and gradient boosting machine (gbm) algorithms for prediction comparative analysis. I also chose three sampling techniques for data balancing, namely, under sampling, over sampling and synthetic minority over sampling technique (SMOTE). The logistic regression model gave the highest sensitivity.</p>

<p>And in Figure 4, I’ve shown the dot plot which depicts the PR-AUC scores visualization on the imbalanced dataset.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_2_4.png" alt="plot4" /></p>

<p>Figure 4: Dot plot on imbalanced training dataset</p>

<h5 id="v-challenge-metric-computation-on-imbalanced-training-dataset">v. Challenge metric computation on imbalanced training dataset</h5>

<p>Challenge metric is the cost metric of misclassification. Where cost 1 = 10 and cost 2 = 500</p>

<p>Total cost = 10 * CM.FP + 500 * CM.FN</p>

<p>Total cost = 10<em>55+500</em>149 = $75, 050</p>

<p>The company will incur $75, 050 in misclassification cost on the imbalanced dataset.</p>

<h5 id="vi-predictive-modelling-on-balanced-training-dataset">vi. Predictive modelling on balanced training dataset</h5>

<p>For data balancing, I chose 3 different methods, namely under-sampling, over-sampling and Synthetic Minority Over Sampling Technique (SMOTE). I found the over sampling technique to be most effective for logistic regression model. So I applied this technique on the balanced training dataset</p>

<p>I’ll now show the predictive modelling on the balanced training dataset. As shown earlier, I split the dataset into 70-30 ratio and applied a 3-fold cross validation. Then, I applied the logistic regression algorithm by up-sampling, down-sampling and synthetic minority over sampling methods shown in Figure 5.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_2_5.png" alt="plot5" /></p>

<p>Figure 5: Dot plot on balanced training dataset</p>

<h5 id="vii-challenge-metric-computation-on-balanced-training-dataset">vii. Challenge metric computation on balanced training dataset</h5>

<p>Challenge metric is the cost metric of misclassification. Where cost 1 = 10 and cost 2 = 500</p>

<p>Over sampling based logistic regression</p>

<p>Total cost = 10 * CM.FP + 500 * CM.FN</p>

<p>Total cost = 10<em>540+500</em>33 = $21,900</p>

<p>The benefit of data balancing is evident. By extracting the independent variables with variance and balancing, I was able to reduce the misclassification cost from the initial $75,050 to $21,900 on the balanced training dataset.</p>

<h5 id="viii-challenge-metric-computation-on-balanced-test-dataset">viii. Challenge metric computation on balanced test dataset</h5>

<p>Next, I’ll apply the logistic regression over sampled method to the clean test dataset.</p>

<p>Challenge metric is the cost metric of misclassification. Where cost 1 = 10 and cost 2 = 500</p>

<p>Over sampling based logistic regression on test data</p>

<p>Total cost = 10 * CM.FP + 500 * CM.FN</p>

<p>Total cost = 10<em>359+500</em>8 = $7,590</p>

<p>The predicted misclassification cost is found to be $7,590.</p>

<h4 id="discussion">Discussion</h4>

<p>Oversampling and under sampling can be used to alter the class distribution of the training data and both methods have been used to deal with class imbalance. The reason that altering the class distribution of the training data aids learning with highly-skewed data sets is that it effectively imposes non-uniform misclassification costs. There are known disadvantages associated with the use of sampling to implement cost-sensitive learning. The disadvantage with under sampling is that it discards potentially useful data. The main disadvantage with oversampling, from my perspective, is that by making exact copies of existing examples, it makes over fitting likely.

Traditionally, the most frequently used metrics are accuracy and error rate. Considering a basic two-class classification problem, let {p,n} be the true positive and negative class label and {Y,N} be the predicted positive and negative class labels. Then, a representation of classification performance can be formulated by a confusion matrix (contingency table), as illustrated in Table 3. These metrics provide a simple way of describing a classifier’s performance on a given data set. However, they can be deceiving in certain situations and are highly sensitive to changes in data. In the simplest situation, if a given data set includes 5 percent of minority class examples and 95 percent of majority examples, a naive approach of classifying every example to be a majority class example would provide an accuracy of 95 percent. Taken at face value, 95 percent accuracy across the entire data set appears superb; however, on the same token, this description fails to reflect the fact that 0 percent of minority examples are identified. That is to say, the accuracy metric in this case does not provide adequate information on a classifier’s functionality with respect to the type of classification required.

Although ROC curves provide powerful methods to visualize performance evaluation, they also have their own limitations. In the case of highly skewed data sets, it is observed that the ROC curve may provide an overly optimistic view of an algorithm’s performance. Under such situations, the PR curves can provide a more informative representation of performance assessment. To see why the PR curve can provide more informative representations of performance assessment under highly imbalanced data, let’s consider a distribution where negative examples significantly exceed the number of positive examples (i.e. N_c>P_c). In this case, if a classifier performance has a large change in the number of false positives, it will not significantly change the FP rate since the denominator N_c is very large. Hence, the ROC graph will fail to capture this phenomenon. The precision metric, on the other hand considers the ratio of TP with respect to TP+FP; hence it can correctly capture the classifiers performance when the number of false positives drastically change. Hence, as evident by this example the PR AUC is an advantageous technique for performance assessment in the presence of highly skewed data. Another shortcoming of ROC curves is that they lack the ability to provide confidence intervals on a classifier’s performance and are unable to infer the statistical significance of different classifiers’ performance. They also have difficulties providing insights on a classifier’s performance over varying class probabilities or misclassification costs. In order to provide a more comprehensive evaluation metric to address these issues, cost curves or PR AUC is suggested.</p>

<h5 id="conclusion">Conclusion</h5>

<p>In this dataset, there were several problems notably the major one was the class imbalance issue, which was followed by missing values and other issues that I’ve highlighted in sub-section 3iii. However, the challenge was not the class imbalance issue per se but the choice of an appropriate metric that could correctly answer the assumption I had formulated in sub-section Ci. The tradeoff between PR AUC and AUC is discussed in sub-section 3iii. Furthermore, I was able to reduce the misclassification cost to <strong>$7,590</strong> by over sampling the data.</p>

<h4 id="appendix-a">Appendix A</h4>

<h5 id="explanation-of-statistical-terms-used-in-this-study">Explanation of statistical terms used in this study</h5>

<ul>

<li>Variable: is any characteristic, number or quantity that is measurable. Example, age, sex, income are variables.</li>

<li>Continuous variable: is a numeric or a quantitative variable. Observations can take any value between a set of real numbers. Example, age, time, distance.</li>

<li>Independent variable: also known as the predictor variable. It is a variable that is being manipulated in an experiment in order to observe an effect on the dependent variable. Generally in an experiment, the independent variable is the “cause”.</li>

<li>Dependent variable: also known as the response or outcome variable. It is the variable that is needs to be measured and is affected by the manipulation of independent variables. Generally, in an experiment it is the “effect”.</li>

<li>Variance: explains the distribution of data, i.e. how far a set of random numbers are spread out from their original values.</li>

<li>Regression analysis: It is a set of statistical methods used for the estimation of relationships between a dependent variable and one or more independent variables. It can be utilized to assess the strength of the relationship between variables and for modeling the future relationship between them.</li>

</ul>

<h4 id="appendix-b">Appendix B</h4>

<p>The R code for this study can be downloaded from <a href="https://github.com/duttashi/duttashi.github.io/blob/master/scripts/CaseStudy-air_pressure_system_failure.R">here</a></p>

<![CDATA[A classification approach to predicting air crash survival]]>https://duttashi.github.io/blog/aircraft-crash-survival2020-02-21T00:00:00+00:002020-02-21T00:00:00+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.myblog<h3 id="introduction">Introduction</h3>

<p>Historically there have been several instance of air plane crashes. This study is an attempt to explore the possible causes of such air crashes, and to determine if air travel is a safe option.</p>

<h3 id="objective">Objective</h3>

<p>The objective of this study are two fold, namely;</p>

<p>a. To perform an Exploratory Data Analysis (EDA) to determine the common cause/reason of airplane crash, countries with maximum/minimum airplane crashes, fatalities vs survived ratio and any other interesting trend.</p>

<p>b. To develop a Predictive Model (PM) to determine the following;</p>

<ol>

<li>Is traveling by air a safe option?</li>

<li>In particular analyze the historical data to determine the accuracy of air crash survival.</li>

</ol>

<h3 id="data-analysis">Data Analysis</h3>

<p>A systematic data analysis was undertaken to answer the objectives.</p>

<h4 id="a-data-source">A. Data source</h4>

<ul>

<li>For this analysis, I have used two data sources. The primary data source was Kaggle and the secondary source was <code class="language-plaintext highlighter-rouge">www.planecrashinfo.com</code></li>

<li>The dataset hosted on Kaggle was from 1908 till 2009.</li>

<li>The secondary data source was required because I needed plane crash data from 2010 until 2020. This would help in both EDA and PM.</li>

<li>So for this analysis, I wrote a custom scrapper to extract the air crash data from <code class="language-plaintext highlighter-rouge">www.planecrashinfo.com</code></li>

</ul>

<h4 id="b-exploratory-data-analysis">B. Exploratory Data Analysis</h4>

<p>Both datasets were dirty. Several data management tasks were carried out to clean the data. As per a researcher Wickham, H. (2014), tidy data is a dataset where each variable is a column and each observation (or case) is a row.</p>

<h5 id="1-data-management-decisions">1. Data management decisions</h5>

<ul>

<li>The Kaggle dataset consisted of 5,268 observations in 13 variables. It had 10,198 missing values</li>

<li>The external dataset consisted of 237 observation in 13 variables.</li>

<li>The missing values in external dataset were coded as “?”. These were re-coded to NA. There were 222 missing values.</li>

<li>The Kaggle dataset and the external data were then merged into a composite dataframe, hereafter referred to as <code class="language-plaintext highlighter-rouge">df</code>.</li>

<li>The <code class="language-plaintext highlighter-rouge">df</code> consisted of 5,505 observations in 13 variables.</li>

<li>The range of aircraft crash years was from 1908 till 2020.</li>

</ul>

<h5 id="ii-feature-engineering">ii. Feature engineering</h5>

<p>The variable summary contained free form text related to plane crash details. It contained important information. But it needed cleaning. So I created some derived variables like <code class="language-plaintext highlighter-rouge">crash_reason</code>, <code class="language-plaintext highlighter-rouge">crash_date, crash_month, crash_year</code>, <code class="language-plaintext highlighter-rouge">crash_hour, crash_minute, crash_second</code>, <code class="language-plaintext highlighter-rouge">crash_area, crash_country</code>, <code class="language-plaintext highlighter-rouge">crash_route_start, crash_route_mid, crash_route_end</code>, <code class="language-plaintext highlighter-rouge">crash_operator_type</code>, <code class="language-plaintext highlighter-rouge">survived</code>, <code class="language-plaintext highlighter-rouge">alive_dead_ratio</code>.</p>

<h4 id="c-data-visualization">C. Data Visualization</h4>

<p>As the common adage goes, “a picture is worth a thousand words”. Once the data was cleaned and composed in a tidy format, it was ready for visualizations. Data visualization helps in determining possible relationship between variables. In Fig-1 & Fig-2, I show the common reasons for air crash sorted by descriptions and words. In particular, air crash during take offs are maximum, see Fig-1.</p>

<h5 id="i-visualizing-the-common-reasons-attributed-to-air-plane-crash">i. Visualizing the common reasons attributed to air plane crash</h5>

<p><img src="https://duttashi.github.io/images/CS_DSI_1.png" alt="plot1" /></p>

<p>Fig-1: Common air crash descriptions</p>

<h5 id="ii-visualizing-the-common-words-used-for-air-plane-crash">ii. Visualizing the common words used for air plane crash</h5>

<p><img src="https://duttashi.github.io/images/CS_DSI_2.png" alt="plot2" /></p>

<p>Fig-2: Common air crash words</p>

<h5 id="iii-visualizing-the-crashed-flight-operators">iii. Visualizing the crashed flight operators</h5>

<p>A majority of the flight operators are US-military, AirForce, Aeroflot, Air France and Luftansa, as seen from Fig-3.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_3.png" alt="plot3" /></p>

<p>Fig-3: Air crash flight operators</p>

<p>The peak of air crash survivors was in year 2000, see Fig-4. Probably the reason could be because of better aircraft’s compared to yesteryear’s.</p>

<h5 id="iv-visualizing-the-air-crash-survivors">iv. Visualizing the air crash survivors</h5>

<p><img src="https://duttashi.github.io/images/CS_DSI_4.png" alt="plot4" /></p>

<p>Fig-4: Air crash survivors by year</p>

<p>It was found that there were more civilian air crashes as compared to military crashes. Moreover, 3,198 fatalities are observed in air crashes since 1908, including both civilian and military air crashes. So, I took a subset of the civilian air crashes data and plotted it. I present them below in the form of some hypothesis.</p>

<h5 id="v-visualizing-the-civilian-air-crash-fatalities">v. Visualizing the civilian air crash fatalities</h5>

<p><img src="https://duttashi.github.io/images/CS_DSI_5.png" alt="plot5" /></p>

<p>Fig-5: Civilian air crash survivors by year</p>

<ul>

<li>

<p>The peak of air crashes lay between the years 1970-1980s.</p>

</li>

<li>

<p>Off these 58 aircraft’s crashed in Alaska, followed by 45 in Russia, 32 and 30 in Colombia and California respectively.</p>

</li>

<li>

<p>I then filtered data for crash year after year 2010 and found that Russia recorded maximum civilian fatalities in year 2011 (dead=5), followed by Indonesia in year 2015 (dead=4) and Russia in year 2012 (dead=4). See Fig-5.</p>

</li>

</ul>

<h5 id="vi-is-there-a-relationship-between-civilian-air-crash-year-and-crash-reason">vi. Is there a relationship between civilian air crash year and crash reason</h5>

<p>I plotted this relationship and found the following:</p>

<ul>

<li>There were 4,692 civilian air crashes since 1908 and 813 military induced air crashes. See Fig-6.</li>

<li>Off these 4,692 civilian air crashes, 644 occurred after year 2000.</li>

<li>Off the 644 civil air crashes, 301 were technical failures, 86 by natural cause, 52 crashed in mountains and 7 were shot down by military. There are 198 uncategorized crashes.</li>

<li>The civilian aircrafts shot down by military crashed in countries like Congo (year 2003), Iran (year 2020), Laos (year 2014), Kedarnath, India (year 2013), Rapua (year 2016), Russia (year 2001) and Zabul province (year 2010).</li>

<li>Majority of civil air crashes were due to technical failure. At least 4 aircrafts crashed in Russia in 2011 because of technical failure. This was followed by Sudan, where 3 planes were lost in 2008 because of technical failure. Since the year 2010, there were 20 civilian aircraft crashes for Russia, 10 for Nepal, followed by Congo and Indonesia at 9 each.</li>

<li>The median for military action related air crash was around year 1951</li>

<li>The median for mountain and natural caused crashes was around year 1976</li>

<li>The median for technical failure related crashes was around 1977.</li>

</ul>

<p><img src="https://duttashi.github.io/images/CS_DSI_6.png" alt="plot6" /></p>

<p>Fig-6: Reasons for civilian air crashes sorted by year</p>

<h5 id="vii-is-there-a-relationship-between-civilian-air-crash-month-and-crash-reason">vii. Is there a relationship between civilian air crash month and crash reason</h5>

<p>I plotted this relationship and found the following:</p>

<ul>

<li>A majority of air crashes took place around the month of July. These crashes were related to mountain, natural, miscellaneous and natural reasons. See Fig-7.</li>

<li>Russia tops this list with 7 air crafts crashing in July month because of technical failure. Off these 7 air crafts, 4 were of Antonov An series.</li>

</ul>

<p><img src="https://duttashi.github.io/images/CS_DSI_7.png" alt="plot7" /></p>

<p>Fig-7: Reasons for civilian air crashes sorted by month</p>

<h5 id="viii-is-there-a-relationship-between-civilian-air-crash-fatalities-and-crash-reason">viii. Is there a relationship between civilian air crash fatalities and crash reason</h5>

<p>Although the median for civilian air crash fatalities normally centered around 1-5 people, but there were several outlier values too. For instance in one military action induced civil aircraft crash took the life of all 290 people aboard. This incident occurred in 1988 at 10:55pm over the Persian Gulf, near Bandar Abbas in Iran. The Airbus A300B2-203 bearing registration number EPIBU was shot down by an US Navy vessel USS Vincennes by a SAM (surface to air) missile. See Fig-8.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_8.png" alt="plot8" /></p>

<p>Fig-8: Reasons for civilian air crashes sorted by fatalities</p>

<h4 id="d-data-sub-setting">D. Data sub setting</h4>

<p>Looking at the data distribution, I found maximum observation were related to civilian aircraft crashes (n=4692) while the observations for military aircraft crashes were less (n=813). Furthermore, I subset the civilian air craft crashes since the year 2010. The reasoning is, to answer the first objective, “is travelling by air a safe option”, I needed to analyze the data for the last one decade. The data dimension for civilian air craft crash since year 2010 was 205 observations in 24 variables (includes both original & derived variables).</p>

<h4 id="e-detecting-near-zero-variance-nzv">E. Detecting Near Zero Variance (NZV)</h4>

<p>NZV is a property wherein a given variable has almost zero trend, i.e. all its values are identical. I found two such variables in civilian aircraft crashes. They were, “ground” & “crash operator type”. I removed them from further analysis. I also removed the summary variable. At this stage, the data dimension for civilian air craft crash since year 2010, was 205 observations in 21 variables (includes both original & derived variables)</p>

<h4 id="f-missing-data-analysis">F. Missing data analysis</h4>

<p>There are two types of missing data:</p>

<ol>

<li>

<p>Missing Completely At Random (MCAR): is a desirable scenario</p>

</li>

<li>

<p>Missing Not At Random: is a serious issue and it would be best to check the data gathering process.</p>

</li>

</ol>

<p>For this analysis, I’m assuming the data is MCAR. Usually a safe minimal threshold is 5% of the total for a dataset. For a given variable, if the data is missing for more than 5% then it’s safe to leave that variable out of analysis. Basis of this assumption, I found the following variables, <code class="language-plaintext highlighter-rouge">Crash_hour, Crash_minute, Flight, Crash_route_start, Crash_route_mid, Crash_route_end, Fuselage_number</code>, with more than 5% missing data.</p>

<p>It should be noted that for civilian aircraft crashes since 1908, in all there were 16051 observations with missing data. Furthermore, for civilian aircraft crashes since 2010, there were 370 missing values. Since the sample size was small (n=205), I imputed the missing values as Zero.</p>

<h4 id="g-correlation-detection">G. Correlation detection</h4>

<p>In building a predictive model, it’s always advisable to account for correlation. It is a statistical term that measures the degree of linear dependency between variables. So variables that are highly correlated to each other are deemed to be non-contributors to a given predictive model. In Fig 9, I show the correlation plot for continuous variables. For instance, the variable aboard and fatalities have a strong negative correlation.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_9.png" alt="plot9" /></p>

<p>Fig-9: Correlation detection for continuous variables</p>

<h5 id="i-correlation-treatment">i. Correlation treatment</h5>

<p>To treat the correlation, I have applied an unsupervised dimensionality reduction and feature selection approach called the Principal Component Analysis (PCA) for continuous variables, and the Multiple Correspondence Analysis (MCA) for the categorical variables.

In Fig-10, I have shown relevant principal components (PCs). Notice the red horizontal line in Fig 10 (B). This red line indicates the cut-off point. Therefore the continuous variables namely, “aboard, fatalities, crash minute, crash month, crash date, crash year” are deemed relevant for further analysis.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_10.png" alt="plot10" /></p>

<p>Fig-10: Principal Component Analysis for dimensionality reduction & feature selection</p>

<p>Next, In Fig-11, I have shown the MCA for categorical variables. Notice the red horizontal line in Fig-11 (B). This red line indicates the cut-off point. As we can see from this plot that none off the categorical variables are deemed relevant for further analysis.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_11.png" alt="plot11" /></p>

<p>Fig-11: Multiple Correspondence Analysis for dimensionality reduction & feature selection</p>

<p>By this stage, the data dimension for air craft crashes since 2010 was reduced to 205 observation in 7 variables.</p>

<h4 id="h-predictive-analytics">H. Predictive analytics</h4>

<p>The derived variable <code class="language-plaintext highlighter-rouge">survived</code> was continuous in nature. For a classification task, I coerced it into categorical with two levels. If there were 0 survivors, then I coded it as “dead” and if there were more than 1 survivor, it was coded as <code class="language-plaintext highlighter-rouge">alive</code> and saved it as a variable called <code class="language-plaintext highlighter-rouge">crash survivor</code>.</p>

<p>I found that in 205 complete clean observations, the proportion of dead was 63% and that of alive was 37%. This indicated that the outcome/dependent variable <code class="language-plaintext highlighter-rouge">crash survivor</code> was imbalanced. If this anomaly is left untreated, then any model based on this variable will give erroneous results. An imbalanced dataset refers to the disparity encountered in the dependent (response) variable.</p>

<p>Therefore, an imbalanced classification problem is one in which the dependent variable has imbalanced proportion of classes. In other words, a data set that exhibits an unequal distribution between its classes is considered to be imbalanced. I split the clean dataset into a 70/30 % split by 10-fold cross validation. The training set contained 145 observations in 7 variables. The test set contained 60 observations in 7 variables. The 7 independent variables are, <code class="language-plaintext highlighter-rouge">crash year, crash month, crash date, crash minute, aboard, fatalities and crash survivor</code>.</p>

<h5 id="i-methods-to-deal-with-imbalanced-classification">i. Methods to deal with imbalanced classification</h5>

<ol>

<li>Under Sampling</li>

</ol>

<p>With under-sampling, we randomly select a subset of samples from the class with more instances to match the number of samples coming from each class. The main disadvantage of under-sampling is that we lose potentially relevant information from the left-out samples.</p>

<ol>

<li>Over Sampling</li>

</ol>

<p>With oversampling, we randomly duplicate samples from the class with fewer instances or we generate additional instances based on the data that we have, so as to match the number of samples in each class. While we avoid losing information with this approach, we also run the risk of over fitting our model as we are more likely to get the same samples in the training and in the test data, i.e. the test data is no longer independent from training data. This would lead to an overestimation of our model’s performance and generalization.</p>

<ol>

<li>ROSE and SMOTE</li>

</ol>

<p>Besides over- and under-sampling, there are hybrid methods that combine under-sampling with the generation of additional data. Two of the most popular are ROSE and SMOTE.</p>

<p>The ideal solution is, we should not simply perform over- or under-sampling on our training data and then run the model. We need to account for cross-validation and perform over or under-sampling on each fold independently to get an honest estimate of model performance.</p>

<h5 id="ii-prediction-on-imbalanced-dataset">ii. Prediction on imbalanced dataset</h5>

<p>To test the accuracy of air crash survivors, I applied three classification algorithms namely Classification and Regression Trees (CART), K-Nearest Neighbors (KNN) and Logistic Regression (GLM) to the clean imbalanced dataset. The CART and GLM model give 100% accuracy. See Fig-12.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_12.png" alt="plot12" /></p>

<p>Fig-12: Accuracy plot of predictive models on imbalanced data</p>

<p>I have shown below the predictive modelling results on imbalanced dataset.</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code>Call:

summary.resamples(object = models)

Models: cart, knn, glm

Number of resamples: 100

ROC

Min. 1st Qu.Median Mean 3rd Qu. Max. NA's

cart 0.4907407 0.7666667 0.8605556 0.8390667 0.933796310

knn 0.3444444 0.6472222 0.7527778 0.7460315 0.845833310

glm 0.9000000 1.0000000 1.0000000 0.9977593 1.000000010

Sens

Min. 1st Qu. Median Mean 3rd Qu. Max. NA's

cart 0.1666667 0.60.8 0.7310000 0.833333310

knn 0.0000000 0.40.5 0.5406667 0.666666710

glm 0.6000000 1.01.0 0.9723333 1.000000010

Spec

Min. 1st Qu.Median Mean 3rd Qu. Max. NA's

cart 0.6666667 0.8000000 0.8888889 0.8846667 1.000000010

knn 0.5555556 0.7777778 0.8888889 0.8461111 0.888888910

glm 0.8888889 1.0000000 1.0000000 0.9801111 1.000000010

Confusion Matrix and Statistics

Reference

Prediction alive dead

alive220

dead 0 38

Accuracy : 1

95% CI : (0.9404, 1)

No Information Rate : 0.6333

P-Value [Acc NIR] : 1.253e-12

Kappa : 1

Mcnemar's Test P-Value : NA

Sensitivity : 1.0000

Specificity : 1.0000

Pos Pred Value : 1.0000

Neg Pred Value : 1.0000

Prevalence : 0.3667

Detection Rate : 0.3667

Detection Prevalence : 0.3667

Balanced Accuracy : 1.0000

'Positive' Class : alive

</code></pre></div></div>

<p>From the result above, its evident the sensitivity of CART and GLM model is maximum.</p>

<h5 id="iii-prediction-on-balanced-dataset">iii. Prediction on balanced dataset</h5>

<p>I balanced the dataset by applying under, over sampling method as well as the ROSE method. From the results shown in above, I picked the logistic regression model to train on the balanced data. As we can see now, the sensitivity for over and under-sampling is maximum when applied the logistic regression algorithm. So I chose, under sampling for testing the model. See Fig-13 and the confusion matrix results are shown below.</p>

<p><img src="https://duttashi.github.io/images/CS_DSI_13.png" alt="plot13" /></p>

<p>Fig-13: Accuracy plot of predictive models on balanced data</p>

<p>After balancing the data and reapplying a logistic regression algorithm, the accuracy to predict the air crash survivor accuracy reduced to 98%, as shown in confusion matrix below.</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code>Call:

summary.resamples(object = models)

Models: glm_under, glm_over, glm_rose

Number of resamples: 100

ROC

Min. 1st Qu.Median Mean 3rd Qu. Max.

glm_under 0.8240741 1.0000000 1.0000000 0.9920185 1.0000000 1.0000000

glm_over 0.9000000 1.0000000 1.0000000 0.9977593 1.0000000 1.0000000

glm_rose 0.0000000 0.1638889 0.2722222 0.2787333 0.3555556 0.7777778

NA's

glm_under0

glm_over 0

glm_rose 0

Sens

Min. 1st Qu.Median Mean 3rd Qu. Max. NA's

glm_under 0.6 1.0 1.0000000 0.9746667 1.010

glm_over 0.6 1.0 1.0000000 0.9723333 1.010

glm_rose 0.0 0.2 0.3666667 0.3533333 0.510

Spec

Min. 1st Qu.Median Mean 3rd Qu. Max.

glm_under 0.7777778 1.0000000 1.0000000 0.9745556 1.0000000 1.0000000

glm_over 0.8888889 1.0000000 1.0000000 0.9801111 1.0000000 1.0000000

glm_rose 0.0000000 0.2222222 0.3333333 0.3538889 0.4444444 0.8888889

NA's

glm_under0

glm_over 0

glm_rose 0

Confusion Matrix and Statistics

Reference

Prediction alive dead

alive221

dead 0 37

Accuracy : 0.9833

95% CI : (0.9106, 0.9996)

No Information Rate : 0.6333

P-Value [Acc > NIR] : 4.478e-11

Kappa : 0.9645

Mcnemar's Test P-Value : 1

Sensitivity : 1.0000

Specificity : 0.9737

Pos Pred Value : 0.9565

Neg Pred Value : 1.0000

Prevalence : 0.3667

Detection Rate : 0.3667

Detection Prevalence : 0.3833

Balanced Accuracy : 0.9868

'Positive' Class : alive

</code></pre></div></div>

<h5 id="iv-results-interpretation">iv. Results interpretation</h5>

<p>In answering the second objective of this analysis, it’s been found that the logistic regression model gives 98% accuracy in determining the accuracy of an air crash survival. This explains the need for balancing the dataset before modeling.</p>

<h4 id="i-limitations">I. Limitations</h4>

<p>Perhaps, one of the challenges on working on this dataset was the higher number of categorical variables. And each such variable having more than 10 distinct levels. Decomposing them into a smaller number of meaningful levels would require help from a subject matter expert. Besides this, the dataset contained a huge number of missing values in categorical variables. Imputing them would be bottleneck to the primary memory. I replaced the missing values with Zero.</p>

<h4 id="j-discussion">J. Discussion</h4>

<p>There can be an argument on the necessity of data balancing. For instance, in this analysis I have shown that imbalanced data give 100% accuracy, in contrast the balanced data accuracy reduces to 98%. The reasoning here is, balanced or imbalanced data is dependent on distribution of data points. By balancing the data, the analyst is absolutely certain about the robustness of the model, which would not be possible with an imbalanced dataset.</p>

<p>Traveling by air is certainly a safe option in present times. I have proved this claim by conducting a systematic rigorous data analysis. Moreover, the logistic regression model trained on balanced under-sampled data yield the maximum sensitivity.</p>

<h4 id="k-conclusion-and-future-work">K. Conclusion and Future Work</h4>

<p>In this study, I have analyzed the last 101 years data on air craft crashes. I have shown in my detailed analysis that given certain factors like <code class="language-plaintext highlighter-rouge">crash year, crash month, crash date, crash minute, aboard, fatalities and survived</code>, it’s possible to predict the accuracy of air crash survivors. I have tested several hypothesis in this work, see section C. It would be interesting to see trends between aircraft type and air crash fatalities which I leave as a future work.</p>

<h4 id="reference">Reference</h4>

<p>Wickham, H. (2014). Tidy data. Journal of Statistical Software, 59(10), 1-23.</p>

<h4 id="appendix-a">Appendix A</h4>

<h5 id="explanation-of-statistical-terms-used-in-this-study">Explanation of statistical terms used in this study</h5>

<ul>

<li>Variable: is any characteristic, number or quantity that is measurable. Example, age, sex, income are variables.</li>

<li>Continuous variable: is a numeric or a quantitative variable. Observations can take any value between a set of real numbers. Example, age, time, distance.</li>

<li>Categorical variable: describes quality or characteristic of a data unit. Typically it contains text values. They are qualitative variables.</li>

<li>Categorical-nominal: is a categorical variable where the observation can take a value that cannot be organized into a logical sequence. Example, religion, product brand.</li>

<li>Independent variable: also known as the predictor variable. It is a variable that is being manipulated in an experiment in order to observe an effect on the dependent variable. Generally in an experiment, the independent variable is the “cause”.</li>

<li>Dependent variable: also known as the response or outcome variable. It is the variable that is needs to be measured and is affected by the manipulation of independent variables. Generally, in an experiment it is the “effect”.</li>

<li>Variance: explains the distribution of data, i.e. how far a set of random numbers are spread out from their original values.</li>

<li>Sensitivity: is the ability of a test to correctly identify, the occurrence of a value in the dependent or the response variable. Also known as the true positive rate.</li>

<li>Specificity: is the ability of a test to correctly identify, the non-occurrence of a value in the dependent or the response variable. Also known as the true negative rate.</li>

<li>Cohen’s Kappa: is a statistic to measure the inter-rate reliability of a categorical variable. It ranges from -1 to +1.</li>

</ul>

<h4 id="appendix-b">Appendix B</h4>

<p>The R code for this study can be downloaded from <a href="https://duttashi.github.io/scripts/CaseStudy-aircrash_survival.R">here</a></p>

<![CDATA[Employee flight risk modeling behavior]]>https://duttashi.github.io/blog/employee-flight-risk-prediction-behaviour2019-05-29T00:00:00+00:002019-05-29T00:00:00+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.myblog<h3 id="an-analytical-model-for-predicting-employee-flight-risk-behaviour">An analytical model for predicting employee flight risk behaviour</h3>

<p>“People are the nucleus of any organization. So, how can you find, engage and retain top performers who’ll contribute to your goals, your future?”</p>

<p>There is no dearth of Enterprise Resource Planning (ERP) systems utilized by human resource companies, however, the inclusion of machine learning to such ERP systems can be very useful. This leads one to ask the following question.</p>

<h5 id="a-question">A. Question</h5>

<p>To develop a predictive model to understand the reasons why employees leave the organization.</p>

<h5 id="b-objectives">B. Objectives</h5>

<p>This report has two objectives, namely;</p>

<p>i. To conduct an exploratory data analysis for determining any possible relationship between the variables</p>

<p>ii. To develop a predictive model for identifying the potential employee attrition reasons.</p>

<h5 id="c-data-analysis">C. Data Analysis</h5>

<p>A systematic data analysis was undertaken to answer the business question and objective.</p>

<p>i. <strong>Exploratory Data Analysis (EDA)</strong></p>

<p>The training set had <code class="language-plaintext highlighter-rouge">13000</code> observations in <code class="language-plaintext highlighter-rouge">11</code> columns. The test set had <code class="language-plaintext highlighter-rouge">1999</code> observations in <code class="language-plaintext highlighter-rouge">10</code> columns. There were zero missing values. I now provide the following observations;</p>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt1.png" alt="plot1" /></p>

<p>Fig-1: Correlation plot</p>

<p>a. I renamed some variables like “sales” was renamed to “role”, “time_spend_company” was renamed to “exp_in_company”.</p>

<p>b. The employee attrition rate was 21.41%</p>

<p>c. The company had an employee attrition rate of 24%</p>

<p>d. The mean satisfaction of employees was 0.61</p>

<p>e. From the correlation plot shown in Fig-1, there is a positive (+) correlation between <code class="language-plaintext highlighter-rouge">projectCount</code>, <code class="language-plaintext highlighter-rouge">averageMonthlyHours</code>, and <code class="language-plaintext highlighter-rouge">evaluation</code>. Which could mean that the employees who spent more hours and did more projects were evaluated highly.</p>

<p>f. For the negative (-) relationships, <code class="language-plaintext highlighter-rouge">employee attrition</code> and <code class="language-plaintext highlighter-rouge">satisfaction</code> are highly correlated. Probably people tend to leave a company more when they are less satisfied.</p>

<p>g. A one-sample t-test was conducted to measure the satisfaction level.</p>

<ol>

<li>Hypothesis Testing: Is there significant difference in the means of satisfaction level between attrition and the entire employee population?</li>

</ol>

<p>1.1. <em>Null Hypothesis</em>: (<code class="language-plaintext highlighter-rouge">H0: pEmployeeLeft = pEmployeePop</code>) The null hypothesis would be that there is no difference in satisfaction level between attrition and the entire employee population.</p>

<p>1.2. <em>Alternate Hypothesis</em>: (<code class="language-plaintext highlighter-rouge">HA: pEmployeeLeft!= pEmployeePop</code>) The alternative hypothesis would be that there is a difference in satisfaction level between attrition and the entire employee population.</p>

<p><strong>Findings</strong></p>

<ul>

<li>The mean for the employee population is 0.618</li>

<li>The mean for attrition is 0.439</li>

</ul>

<p>I then conducted a t-test at 95% confidence level to see if it correctly rejects the null hypothesis that the sample comes from the same distribution as the employee population.</p>

<p><strong>Findings</strong></p>

<ul>

<li>I rejected the null hypothesis because the t-distribution left and right quartile ranges are -1.960. The T-score lies outside the quantiles and the p-value is lower than the confidence level of 5%.</li>

<li>The test result shows the test statistic “t” is equal to 0.36. This test statistic tells us how much the sample mean deviates from the null hypothesis. The alternative hypothesis is True as the mean is not equal to 0.61.</li>

</ul>

<p><strong>Inference</strong></p>

<p>From the above findings does not necessarily mean the findings are of practical significance because of two reasons, namely; collect more data or conduct more experiments.</p>

<p>h. Now let’s look at some distribution plots using some of the employee features like “Satisfaction”, “Evaluation” and “Average monthly hours”.</p>

<p><strong>Summary</strong>: Let’s examine the distribution on some of the employee’s features.</p>

<p>Here’s what I found:</p>

<ul>

<li><strong>Satisfaction</strong> There is a huge spike for employees with low satisfaction and high satisfaction.</li>

<li><strong>Evaluation</strong> There is a <code class="language-plaintext highlighter-rouge">bimodal</code> distribution of employees for low evaluations (less than 0.6) and high evaluations (more than 0.8)</li>

<li><strong>AverageMonthlyHours</strong> There is another bimodal distribution of employees with lower and higher average monthly hours (less than 150 hours & more than 250 hours)</li>

<li>The evaluation and average monthly hour graphs both share a similar distribution.</li>

<li>Employees with lower average monthly hours were evaluated less and vice versa.</li>

<li>If you look back at the correlation matrix, the high correlation between <code class="language-plaintext highlighter-rouge">evaluation</code> and <code class="language-plaintext highlighter-rouge">averageMonthlyHours</code> does support this finding.

Note: Employee attrition is coded as <code class="language-plaintext highlighter-rouge">1</code> and no attrition is coded as <code class="language-plaintext highlighter-rouge">0</code>.</li>

</ul>

<p>i. The relationship between <code class="language-plaintext highlighter-rouge">Salary</code> and <code class="language-plaintext highlighter-rouge">Attrition</code></p>

<ul>

<li>Majority of employees who left either had low or medium salary.</li>

<li>Barely any employees left with high salary</li>

<li>Employees with low to average salaries tend to leave the company.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt2.png" alt="plot2" /></p>

<p>Fig-2: Salary vs Attrition plot</p>

<p>j. The relationship between <code class="language-plaintext highlighter-rouge">Department</code> and <code class="language-plaintext highlighter-rouge">Attrition</code></p>

<ul>

<li>The <strong>sales</strong>, <strong>technical</strong>, and <strong>support</strong> department were the top 3 departments to have employee attrition.</li>

<li>The management department had the least count of attrition.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt3.png" alt="plot3" /></p>

<p>Fig-3: Department vs Attrition plot</p>

<p>k. The relationship between <code class="language-plaintext highlighter-rouge">Attrition</code> and <code class="language-plaintext highlighter-rouge">ProjectCount</code></p>

<ul>

<li>More than half of the employees with <strong>2,6, and 7</strong> projects left the company.</li>

<li>Majority of the employees who did not leave the company had <strong>3, 4, and 5</strong> projects.</li>

<li>All of the employees with 7 projects left the company.</li>

<li>There is an increase in employee attrition rate as project count increases.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt4.png" alt="plot4" /></p>

<p>Fig-4: Project count vs Attrition plot</p>

<p>l. The relationship between <code class="language-plaintext highlighter-rouge">Attrition</code> and <code class="language-plaintext highlighter-rouge">Evaluation</code></p>

<ul>

<li>There is a bimodal distribution for attrition.</li>

<li>Employees with <strong>low</strong> performance tend to leave the company more.</li>

<li>Employees with <strong>high</strong> performance tend to leave the company more.</li>

<li>The <strong>sweet spot</strong> for employees that stayed is within <strong>0.6-0.8</strong> evaluation.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt5.png" alt="plot5" /></p>

<p>Fig-5: Employee evaluation vs Attrition plot</p>

<p>m. The relationship between <code class="language-plaintext highlighter-rouge">Attrition</code> and <code class="language-plaintext highlighter-rouge">AverageMonthlyHours</code></p>

<ul>

<li>Another bi-modal distribution for attrition.</li>

<li>Employees who had less hours of work <strong>(~150hours or less)</strong> left the company more.</li>

<li>Employees who had too many hours of work <strong>(~250 or more)</strong> left the company.</li>

<li>Employees who left generally were <strong>underworked</strong> or <strong>overworked</strong>.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt6.png" alt="plot6" /></p>

<p>Fig-6: Average monthly hour worked vs Attrition plot</p>

<p><strong>Key Observations</strong>: The Fig-7, clearly represents the factors which serve as the top reasons for attrition in a company:</p>

<ul>

<li>Satisfaction level: it already had a negative correlation with the outcome. People with low satisfaction were most likely to leave even when compared with evaluations.</li>

<li>Salary and the role they played has one of the least impact on attrition.</li>

<li>Pressure due to the number of projects and how they were evaluated also holds key significance in determining attrition.</li>

<li>All features were deemed important.</li>

</ul>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt7.png" alt="plot7" /></p>

<p>Fig-7: Feature importance plot</p>

<ol>

<li><strong>Data modeling</strong></li>

</ol>

<p>Base model rate: recall back to <code class="language-plaintext highlighter-rouge">Part 4.1: Exploring the Data</code>, 24% of the dataset contained 1’s (employee who left the company) and the remaining 76% contained 0’s (employee who did not leave the company). The Base Rate Model would simply predict every 0’s and ignore all the 1’s. The base rate accuracy for this data set, when classifying everything as 0’s, would be 76% because 76% of the dataset are labeled as 0’s (employees not leaving the company).

The training data was split into 75% train set and 25% validation set. An initial logistic regression model based on all 10 independent variables (or features) was built on the train set. The model was tested on the validation set. An initial predictive accuracy of 78% was obtained.</p>

<p>Thereafter, I built four models based on the following classifiers, namely:</p>

<p>a. Classification And Regression Trees (CART),</p>

<p>b. Support Vector Machine (SVM),</p>

<p>c. k-nearest neighbor (knn) and</p>

<p>d. logistic regression</p>

<p>The CART, SVM and the KNN model gave an accuracy of over 98% on the training set. I chose the CART and the SVM model for testing. Both models yield an accuracy of 95.5% on the validation set, as shown in Fig-8.</p>

<p><img src="https://duttashi.github.io/images/casestudy-hr-attrition-plt8.png" alt="plot8" /></p>

<p>Fig-8: Predictive modeling results</p>

<p>From Fig-8, I chose the cart model as the final model. Thereafter, I tested this model on the <code class="language-plaintext highlighter-rouge">hr_attrition_test data</code>. Finally to conclude using the cart modeling technique, we can predict the employee attrition at an accuracy of <code class="language-plaintext highlighter-rouge">95.5%</code>.</p>

<p><strong>Summary</strong></p>

<ul>

<li>Employees generally left when they are <strong>underworked</strong> (less than 150hr/month or 6hr/day)</li>

<li>Employees generally left when they are <strong>overworked</strong> (more than 250hr/month or 10hr/day)</li>

<li>Employees with either <strong>really high or low evaluations</strong> should be taken into consideration for high attrition rate</li>

<li>Employees with <strong>low to medium salaries</strong> are the bulk of employee attrition</li>

<li>Employees that had <strong>2,6, or 7 project count</strong> was at risk of leaving the company</li>

<li>Employee <strong>satisfaction</strong> is the highest indicator for employee attrition.</li>

<li>Employee that had <strong>4 and 5 years at the company</strong> should be taken into consideration for high attrition rate</li>

</ul>

<p><strong>Code and Dataset</strong></p>

<ul>

<li>

<p>R code - <a href="https://github.com/duttashi/learnr/blob/master/scripts/Full%20Case%20Studies/CaseStudy-hr_attrition-EDA.R">Exploratory Data Analysis</a>, <a href="https://github.com/duttashi/learnr/blob/master/scripts/Full%20Case%20Studies/CaseStudy-hr_attrition-Predictive_Modelling.R">Predictive Modeling</a></p>

</li>

<li>

<p>Data - <a href="https://github.com/duttashi/learnr/blob/master/data/hr_attrition_train.csv">train data</a>, <a href="https://github.com/duttashi/learnr/blob/master/data/hr_attrition_test.csv">test data</a></p>

</li>

</ul>

<![CDATA[Scraping twitter data to visualize trending tweets in Kuala Lumpur]]>https://duttashi.github.io/blog/scraping-twitter-data-to-analyse-trends-in-KL2018-10-01T00:00:00+00:002018-10-01T00:00:00+00:00Ashish Dutthttps://duttashi.github.ioashishdutt@yahoo.com.myblog<p><em>(Disclaimer: I’ve no grudge against python programming language per se. I think its equally great. In the following post, I’m merely recounting my experience.)</em></p>

<p>It’s been quite a while since I last posted. The reasons are numerous, notable being, unable to decide which programming language to choose for web data scraping. The contenders were data analytic maestro, <code class="language-plaintext highlighter-rouge">R</code> and data scraping guru, <code class="language-plaintext highlighter-rouge">python</code>. So, I decided to give myself some time to figure out which language will be best for my use case. My use case was, <em>Given some search keywords, scrape twitter for related posts and visualize the result</em>. First, I needed the <em>live data</em>. Again, I was at the cross-roads, “R or Python”. Apparently python has some great packages for twitter data streaming like <code class="language-plaintext highlighter-rouge">twython</code>,<code class="language-plaintext highlighter-rouge">python-twitter</code>, <code class="language-plaintext highlighter-rouge">tweepy</code> and <a href="https://github.com/twintproject/twint">twint</a> (<em>Acknowledgment: The library twint was suggested by a reader. See comments section</em>). Equivalent R libraries are <code class="language-plaintext highlighter-rouge">twitteR</code>,<code class="language-plaintext highlighter-rouge">rwteet</code>. I chose the <code class="language-plaintext highlighter-rouge">rtweet</code> package for data collection over python for following reasons;</p>

<ul>

<li>I do not have to create a <code class="language-plaintext highlighter-rouge">credential file</code> (unlike in python) to log in to my twitter account. However, you do need to authenticate the twitter account when using the <code class="language-plaintext highlighter-rouge">rtweet</code> package. This authentication is done just once if using the <code class="language-plaintext highlighter-rouge">rtweet</code> package. Your twitter credentials will be stored locally.</li>

<li>Coding and code readability is far more easier as compared to python.</li>

<li>The <code class="language-plaintext highlighter-rouge">rtweet</code> package allows for multiple hash tags to be searched for.</li>

<li>To localize the data, the package also allows for specifying geographic coordinates.</li>

</ul>

<p>So, using the following code snippet, I was able to scrape data. The code has following parts;</p>

<ol>

<li>

<p>A custom search for tweets function which will accept the search string. If search string is <code class="language-plaintext highlighter-rouge">NULL</code>, it will throw a message and stop, else it will search for hash tags specified in search string and return a data frame as output.</p>

<p>library(rtweet)

library(tidytext)

library(tidyverse)

library(stringr)

library(stopwords)

library(rtweet) # for search_tweets()</p>

</li>

<li>

<p>A data frame containing the search terms. Note, here my search hash-tags are <code class="language-plaintext highlighter-rouge">KTM</code>, <code class="language-plaintext highlighter-rouge">MRT</code> and <code class="language-plaintext highlighter-rouge">monorail</code>.</p>

</li>

</ol>

<p>Create a function that will accept multiple hashtags and will search the twitter api for related tweets</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code>search_tweets_queries <- function(x, n = 100, ...) {

## check inputs

stopifnot(is.atomic(x), is.numeric(n))

if (length(x) == 0L) {

stop("No query found", call. = FALSE)

}

## search for each string in column of queries

rt <- lapply(x, search_tweets, n = n, ...)

## add query variable to data frames

rt <- Map(cbind, rt, query = x, stringsAsFactors = FALSE)

## merge users data into one data frame

rt_users <- do.call("rbind", lapply(rt, users_data))

## merge tweets data into one data frame

rt <- do.call("rbind", rt)

## set users attribute

attr(rt, "users") <- rt_users

## return tibble (validate = FALSE makes it a bit faster)

tibble::as_tibble(rt, validate = FALSE)

}

</code></pre></div></div>

<ol>

<li>

<p>Using the <code class="language-plaintext highlighter-rouge">search_tweets_queries</code> defined in step 1, to search for tweets. Note, the usage of <code class="language-plaintext highlighter-rouge">retryonratelimit=TRUE</code> indicates if search rate limit reached, then the crawler will sleep for a while and start again. Refer to the <code class="language-plaintext highlighter-rouge">rtweet</code> <a href="https://rtweet.info/">documentation</a> for more information.</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code> df_query <- data.frame(query = c("KTM", "monorail","MRT"),

n = rnorm(3), # change this number according to the number of searchwords in parameter query. As of now, the parameter got 3 keywords, therefore this nuber is set to 3.

stringsAsFactors = FALSE )

df_collect_tweets <- search_tweets_queries(df_query$query, include_rts = FALSE,retryonratelimit = TRUE,

#geocode for Kuala Lumpur

geocode = "3.14032,101.69466,93.5mi")

</code></pre></div> </div>

</li>

<li>

<p>Once the data is collected, I’ll keep some selected columns only.</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code> df_select_tweets<- df_collect_tweets %>%

select(c(user_id,created_at,screen_name, !is.na(hashtags),text,

source,display_text_width>0,lang,!is.na(place_name),

!is.na(place_full_name),

!is.na(geo_coords), !is.na(country), !is.na(location),

retweet_count,account_created_at, account_lang, query)

)

</code></pre></div> </div>

</li>

<li>

<p><strong>Text mining</strong>: The collected data need to be cleaned. Therefore, I’ve used the basic <code class="language-plaintext highlighter-rouge">gsub()</code> function and <code class="language-plaintext highlighter-rouge">str_replace_all()</code> from the <code class="language-plaintext highlighter-rouge">stringr</code> library.</p>

<div class="language-plaintext highlighter-rouge"><div class="highlight"><pre class="highlight"><code> # Saving the selected columns data

> df_select_tweets_1 = data.frame(lapply(df_select_tweets, as.character), stringsAsFactors=FALSE)

### Text preprocessing

# 1. Remove URL from text

# collapse to long format

> clean_tweet<- df_select_tweets_1

#clean_tweet<- paste(df_select_tweets_1, collapse=" ")

> clean_tweet$text = gsub("&amp", "", clean_tweet$text)

> clean_tweet$text = gsub("(RT|via)((?:\\b\\W*@\\w+)+)", "", clean_tweet$text)

> clean_tweet$text = gsub("@\\w+", "", clean_tweet$text)

> clean_tweet$text = gsub("[[:punct:]]", "", clean_tweet$text)